Artificial Intelligence (AI) offers us possibilities as broad as its applications and fields. One of the most beneficial for several industries (such as security and service production), and even for our daily lives, is computer vision, which mainly consists of programming computers to interpret their visual environment.

Its characteristics, however, are complex and are worth knowing in depth. The following article will tell you a little more about the “eyes” of artificial intelligence.

Content

- What is computer vision?

- How does computer vision work?

- The evolution of computer vision

- Computer vision applications

- The future of computer vision and AI

What is computer vision?

Computer vision is the branch of Artificial Intelligence concerned with digital systems that detect and process visual information, that is, any data drawn from digital images, videos, and other visual inputs.

Just as artificial intelligence seeks to replicate, in a way, the human brain, computer vision takes as its model the complexity of human vision and the way it works. Thus, state-of-the-art algorithms teach computers to identify images and analyze them at the pixel level so that they can interpret them and perform actions or propose recommendations based on what they see.

While human vision relies on optic nerves and retinas, computer vision operates through cameras, databases, and, as mentioned above, advanced algorithms. Thanks to recent advances in neural networks and deep learning, computer vision has also matched or surpassed people on certain visual tasks. In very little time, its systems take in visual information from databases, learn to recognize different categories of objects, and analyze thousands of real products or activities, detecting details imperceptible to the human eye.

Today, we use computer vision for:

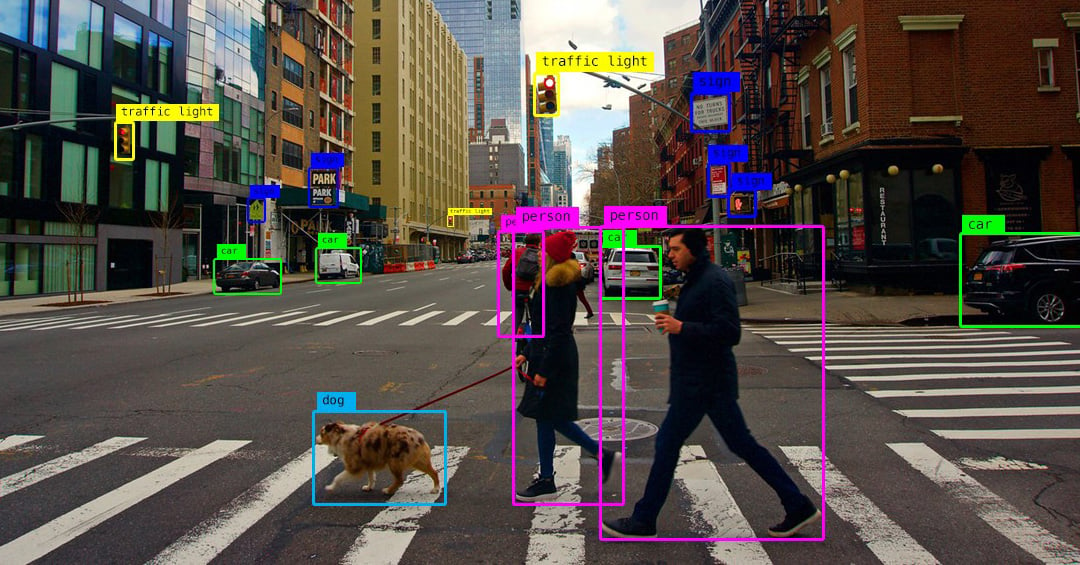

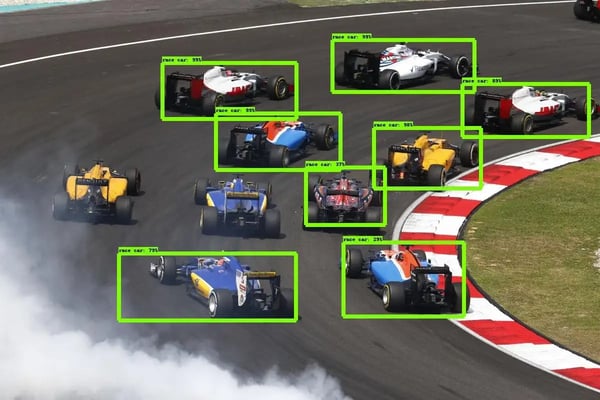

- Object classification: systems that assign the objects in a photo or video to one of thousands of possible categories.

- Object identification: systems that pick out a particular object from a bank of images.

- Object tracking: systems that find objects matching certain search criteria and then follow their movements.
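
To make object tracking a little more concrete, here is a minimal sketch in plain Python. It assumes that some earlier detection step has already produced the center point of each object in every frame; the `track` function, the object names, the coordinates, and the `max_distance` cutoff are all hypothetical. Each known object is simply matched to the nearest detection in the next frame, which is a deliberate simplification of what production trackers do.

```python
import math

def track(previous, detections, max_distance=50.0):
    """Match each tracked object to its nearest detection in the new frame.

    previous: {object_id: (x, y)} positions from the last frame.
    detections: list of (x, y) points found in the new frame.
    Objects with no detection within max_distance are dropped.
    """
    updated = {}
    unused = list(detections)
    for object_id, position in previous.items():
        if not unused:
            break
        nearest = min(unused, key=lambda point: math.dist(position, point))
        if math.dist(position, nearest) <= max_distance:
            updated[object_id] = nearest
            unused.remove(nearest)  # one detection can belong to one object only
    return updated

# Hypothetical positions (in pixels) for two objects across two frames.
frame_1 = {"car": (100, 200), "person": (400, 220)}
frame_2_detections = [(405, 223), (112, 198)]
frame_2 = track(frame_1, frame_2_detections)
print(frame_2)
```

The greedy matching used here can pair objects wrongly when they cross paths; it is only meant to show the idea of following an object from one frame to the next.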

How does computer vision work?

Computer vision systems are modeled on the process our brains follow to make sense of what is around us: relying on patterns to decipher specific objects.

In technical terms, a computer interprets a given image not as a whole but as a grid of pixels, each with a color value represented by numbers. When you hand this image to software, it sees these numbers, and a computer vision algorithm processes them as required.
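
As a small illustration of "an image is just numbers", the sketch below builds a tiny image by hand in plain Python and converts it to grayscale. The pixel values and the `to_grayscale` helper are made up for this example; the weights are the commonly used ITU-R BT.601 luma coefficients, which is one convention among several.

```python
# A tiny 2x3 RGB "image": each pixel is a (red, green, blue) triple in 0..255.
image = [
    [(255, 0, 0), (0, 255, 0), (0, 0, 255)],
    [(0, 0, 0), (128, 128, 128), (255, 255, 255)],
]

def to_grayscale(rgb_image):
    """Reduce each RGB pixel to one brightness value (BT.601 luma weights)."""
    return [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in rgb_image
    ]

gray = to_grayscale(image)
height, width = len(image), len(image[0])

print(f"{width}x{height} image")
for row in gray:
    print(row)
```

Everything a computer vision algorithm does, from finding edges to recognizing faces, starts from grids of numbers like these.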

In practice, a computer is fed a database of images of a particular item or subject. It then goes about identifying patterns in those images, labeling what it sees, and forming a model of the item or topic in question. From what it has cataloged, it can then identify whether subsequent images or videos it receives belong to that category.
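
The loop described above (feed in labeled images, form a model, classify new images) can be sketched with one of the simplest possible techniques, a nearest-centroid classifier. This is an illustrative stand-in rather than what modern systems use: the `train` and `classify` functions, the labels, and the 3x3 pixel values are all assumptions made for the example.

```python
def train(examples):
    """Build a model: the average image for each label.

    examples: list of (pixels, label), where pixels is a flat list of numbers.
    """
    sums, counts = {}, {}
    for pixels, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(pixels)
            counts[label] = 0
        sums[label] = [s + p for s, p in zip(sums[label], pixels)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def classify(model, pixels):
    """Return the label whose average image is closest to `pixels`."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(model, key=lambda label: squared_distance(model[label], pixels))

# 3x3 grayscale images flattened row by row (0 = black, 255 = white).
training_set = [
    ([0, 255, 0, 0, 255, 0, 0, 255, 0], "vertical"),
    ([0, 250, 10, 0, 240, 0, 5, 255, 0], "vertical"),
    ([0, 0, 0, 255, 255, 255, 0, 0, 0], "horizontal"),
    ([10, 0, 0, 250, 255, 240, 0, 5, 0], "horizontal"),
]
model = train(training_set)

new_image = [20, 230, 0, 0, 245, 15, 0, 250, 0]  # a slightly noisy vertical bar
prediction = classify(model, new_image)
print(prediction)
```

Real systems learn far richer patterns than an average image, but the overall shape is the same: examples go in, a model comes out, and new images are compared against it.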

We can compare computer vision activities to the way people put together a jigsaw puzzle. We identify the pieces that make up the image, their edges, and possible combinations in the same way that computer vision neural networks study and assemble the pixels that make up an image.

One of the biggest strengths of computer vision today is Machine Learning. With this branch of artificial intelligence, a computer is fed enough data about the context of a certain kind of image that, eventually, algorithms allow the machine to observe the data on its own and teach itself to distinguish one image from another.

Thanks to advances in this field, today, artificial intelligence systems implement computer vision in:

- Pattern detection: to recognize colors, silhouettes, and repeated shapes in images.

- Image classification: to assign pictures to predefined categories.

- Image segmentation: to examine the different pieces and components of an image.

- Feature matching: to detect similar patterns in images and group them together.

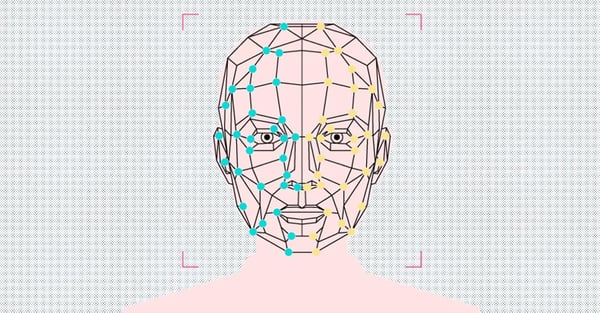

- Face recognition: to identify human faces as well as specific individuals.
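
Of the tasks listed above, image segmentation is easy to show in its most basic form: thresholding, where each pixel is marked as foreground or background depending on its brightness. The sketch below is only that basic form, written in plain Python; the `segment` function, the threshold of 128, and the pixel values are assumptions chosen for illustration.

```python
def segment(gray_image, threshold=128):
    """Mark each pixel 1 (foreground) if it is at least `threshold`, else 0."""
    return [[1 if value >= threshold else 0 for value in row] for row in gray_image]

# A made-up 3x4 grayscale image with a bright patch in the top-right corner.
gray_image = [
    [10, 12, 200, 210],
    [8, 15, 220, 205],
    [9, 11, 14, 13],
]

mask = segment(gray_image)
foreground_pixels = sum(sum(row) for row in mask)

for row in mask:
    print(row)
print("foreground pixels:", foreground_pixels)
```

Segmentation models used in practice learn where the boundaries are instead of relying on a fixed brightness cutoff, but the output has the same form: a label for every pixel.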

The evolution of computer vision

Computer vision's first steps date back to the 1950s, when it was mainly used to interpret handwritten and typewritten text. The major difference between today's computer vision and that of the past is that the older systems required a great deal of manual work and hand coding to function.

Before the advent of Machine Learning and Deep Learning, several people had to perform the most basic tasks for a computer to identify images. For example, a facial recognition task required the following steps:

1. Capture individual images of all subjects to be tracked in an accessible format and manually store them in databases.

2. Enter key information for each image to define the unique features of every subject to be identified, such as lip-to-nose distance, nose size, or eye distance.

3. For comparison purposes, capture new images from videos or photographs.

4. Repeat the process of measuring and entering key information for the new captures, again manually.

After several days of this work, a computer could compare the available images, though with a considerable margin of error.
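
The comparison at the end of that manual process can be sketched as a distance between lists of hand-entered measurements. This is a hypothetical reconstruction for illustration only: the subject names, the measurement values, and the `closest_subject` helper are invented, and the Euclidean distance used here is just one plausible way such numbers could be compared.

```python
import math

# Hand-entered measurements per subject, in the order
# (lip-to-nose distance, nose size, eye distance). All values are made up.
database = {
    "subject_a": (18.0, 32.0, 62.0),
    "subject_b": (22.0, 38.0, 58.0),
}

def closest_subject(measurements, known_subjects):
    """Return (name, distance) for the stored subject closest to `measurements`."""
    name = min(
        known_subjects,
        key=lambda n: math.dist(known_subjects[n], measurements),
    )
    return name, math.dist(known_subjects[name], measurements)

# Measurements taken by hand from a new photograph.
new_capture = (21.5, 37.0, 59.0)
match, distance = closest_subject(new_capture, database)
print(match, round(distance, 2))
```

Because every number had to be measured and typed in by a person, small measuring errors fed directly into the distance, which helps explain the considerable margin of error.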

Now, with the advent of Machine Learning, developers only have to program applications to identify specific patterns in automatically loaded images. After that, they use statistical learning algorithms that classify those patterns and detect certain elements in them.

Deep Learning, on the other hand, is based on neural networks that receive categorized examples of specific information. In this way, they are able to extract common patterns among the data provided and convert them into equations that, in the future, will allow accurate comparisons.
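
To give a feel for how categorized examples turn into an equation, the sketch below trains a single artificial neuron (a perceptron) in plain Python. One neuron is far from deep learning, which stacks many layers of them, so treat this as the smallest building block only. The two input features, the labels, and the function names are assumptions invented for the example.

```python
def predict(weights, bias, features):
    """Return 1 if the weighted sum of the features is positive, else 0."""
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if total > 0 else 0

def train_perceptron(examples, epochs=20, learning_rate=0.1):
    """Learn weights and a bias from (features, label) pairs, label in {0, 1}."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = label - predict(weights, bias, features)
            # Nudge the equation toward the correct answer when it is wrong.
            weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias

# Each example: (average brightness of the left half, of the right half), 0..1.
# Label 1 means "brighter on the right", 0 means "brighter on the left".
examples = [
    ((0.1, 0.9), 1), ((0.2, 0.8), 1), ((0.3, 0.7), 1),
    ((0.9, 0.1), 0), ((0.8, 0.2), 0), ((0.7, 0.3), 0),
]
weights, bias = train_perceptron(examples)

print("learned weights:", weights, "bias:", bias)
print(predict(weights, bias, (0.25, 0.75)), predict(weights, bias, (0.75, 0.25)))
```

The learned weights and bias are the "equation": once training is done, classifying a new image only requires plugging its numbers in.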

Today, deep learning facilitates much more accurate face recognition by taking a previously trained algorithm and giving it samples of the faces of subjects to identify. On top of that, these networks gradually become capable of detecting faces on their own, thanks to the multiple examples provided.

If you want to know more about how this technology works, read our article: Everything you need to know about Deep Learning: the technology that mimics the human brain.

Computer vision applications

Now that we know the basic workings of this powerful technology, let's explore how computer vision is applied in many areas of today's world:

Facial recognition

As we have already seen, facial recognition is perhaps the best-known use of this branch, with algorithms that detect facial aspects in images and compare them with databases containing profiles of individuals.

Facial recognition covers, of course, the detection of suspicious subjects and criminal activity, but it is also used by social media companies such as Facebook to recognize individuals in photographs and tag them. It is also a favorite of banking apps that ask their users for biometric authentication before they can access them.

Augmented reality

Augmented reality apps rely on computer vision to detect physical objects in real time and complement them, or replace them, with virtual elements within our physical environment.

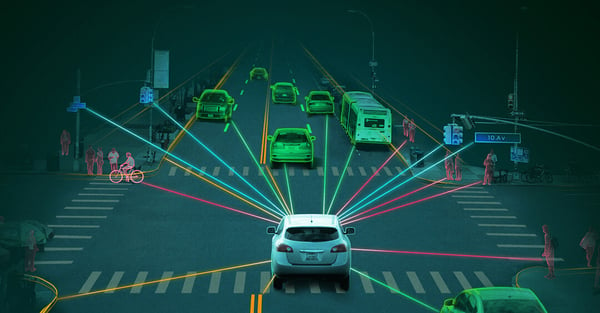

Self-driving cars

Computer vision allows autonomous cars to see their surroundings through cameras that record images and videos from different angles. This footage is then sent to specialized software that processes it to locate anything to look out for, from road signs and pedestrians to other cars.

Health

Visual data are key in medical diagnostics (X-rays and mammograms, to name a few) and, for this reason, it is more necessary than ever to automate their analysis through computer vision. Image segmentation, in particular, facilitates detailed organ analysis and is of great help in detecting tissue containing cancer metastases.

Agriculture

More and more agricultural companies are using computer vision to address challenges such as nutrient deficiencies or the presence of pests. They work from images captured by drones, satellites, or airplanes and then processed by computer vision software.

Content management

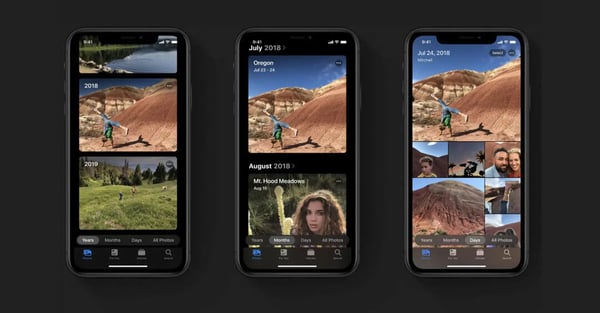

Many photo gallery apps installed on cell phones help us structure our virtual content by accessing our photo collections and adding automatic tags. This makes it much easier to search for specific content and to keep collections well organized.

The future of computer vision and AI

The union between computer vision and Artificial Intelligence is promising and significantly improves the performance, analytical capacity, and accuracy of the latter within the multiple sectors in which it operates.

The main reason for integrating computer vision into AI mechanisms is to create models capable of visualizing situations from all angles and, above all, without human intervention.

In the future, we are sure to witness significant advances in fields such as robotics. In this case, the "eyes" of Artificial Intelligence make it possible for robots to learn about their surroundings and coordinate this deep insight with their daily tasks. An example is that robots in the retail and logistics industries can now handle extensive inventories and recognize every item in them thanks to thorough training in computer vision.

Another exciting development is already happening in the world of security and video surveillance. Video surveillance systems and their cameras now provide a much sharper perception of the environment and what is happening in it. At the same time, computer vision helps the cameras to recognize the different objects present and, consequently, watch out for civilians and differentiate them from suspicious individuals, monitor changes in traffic lights, monitor access to restricted areas, and much more.

Last but not least, we have valuable additions to drones, devices that can recognize different kinds of objects and provide information about them without even being near them. Their aerial views inform us about what is happening in parking lots, agricultural fields, and busy areas.

These are just samples of what an unstoppable technology like Artificial Intelligence combined with one of its most powerful tools can offer us.

Learn more about how computer vision technologies work with AI in our articles Vehicle Tracking: Why License Plate Recognition (LPR) is NOT Enough and Object Recognition in Security: Everything You Need to Know.