What is autonomous artificial intelligence?

Artificial intelligence (AI) is a field of computer science that enhances collaboration between humans and machines. AI has the ability to perform highly analytical, responsive, and scalable tasks automatically and efficiently. Autonomous artificial intelligence is the advancement of this technology, where an autonomous system executes various actions to create an expected outcome, without further human intervention.

In this article, we will review how AI and autonomous AI work, why they are so important, and how we apply this technology in our daily lives.

Table of contents

- What can autonomous artificial intelligence do?

- How does autonomous artificial intelligence work?

- What is the impact of autonomous artificial intelligence?

- 4 types of artificial intelligence

- Weak AI vs. Strong AI vs. Autonomous Artificial Intelligence

- What is Machine Learning?

- What is Deep Learning?

- Benefits and Risks of AI and Autonomous AI

- 4 industries that benefit most from autonomous AI

- AI Glossary

What can autonomous artificial intelligence do?

Autonomous artificial intelligence takes the capabilities of AI to the next level.

Through highly calculating, cooperative, and self-reliant machines, it solves many of the most challenging problems in critical industries. Autonomous artificial intelligence can work without human intervention in specific tasks to accelerate and improve detection, recognition, and response in sectors like law enforcement, banking, retail, and industrial operations.

Autonomous artificial intelligence applications will not replace human labor. Instead, humankind will form new collaborative spaces to work with this technology as a peer. We call it the machine-colleague experience.

If you want to learn more about the history of Artificial Intelligence, Machine Learning, and Deep Learning, see our full article here.

How does autonomous artificial intelligence work?

Traditional artificial intelligence systems work by ingesting great amounts of labeled data to detect, organize, and create certain outcomes like correlations and patterns, predictions, or automatic responses. For example, a video analytics system has to review millions of examples of a car to learn how it looks. This data is usually supplied and labeled by data analysts and AI engineers.

Learn more about Data Science and how it works with AI in our full article here.

Autonomous artificial intelligence is a technology that leverages artificial intelligence functionalities to their maximum capacity, allowing for a faster and more effective response in tasks like object detection, behavior analytics, autonomous tracking, and scalable responses to events. Autonomous AI orchestrates different tasks across various AI algorithms, allowing it to complete and follow through on tasks in time-critical scenarios. It brings together the potential of each artificial intelligence algorithm it interacts with to produce better results.

This allows human operators to delegate repetitive tasks and focus on better strategic approaches to particular issues.
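The orchestration idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `detect`, `track`, and `alert` functions, the `Event` fields, and the 0.8 confidence threshold are hypothetical placeholders, not part of any real product or library.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Event:
    """A hypothetical observation, for example one frame from a camera."""
    label: str
    confidence: float
    log: List[str] = field(default_factory=list)


def detect(event: Event) -> bool:
    # Stand-in for an object-detection model: continue only if it is confident.
    event.log.append("detect")
    return event.confidence >= 0.8  # assumed threshold, set by a human operator


def track(event: Event) -> bool:
    # Stand-in for an autonomous tracking model.
    event.log.append("track")
    return True


def alert(event: Event) -> bool:
    # Stand-in for the response step, such as notifying an operator.
    event.log.append("alert")
    return True


def orchestrate(event: Event, steps: List[Callable[[Event], bool]]) -> List[str]:
    """Run each step in order and stop as soon as one declines to continue."""
    for step in steps:
        if not step(event):
            break
    return event.log


pipeline = [detect, track, alert]
print(orchestrate(Event("car", 0.93), pipeline))  # ['detect', 'track', 'alert']
print(orchestrate(Event("car", 0.41), pipeline))  # ['detect']
```

The human still sets the parameters (here, the threshold and the order of steps), while the system decides on its own how far to carry each event through the pipeline.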

What is the impact of autonomous artificial intelligence?

Autonomous artificial intelligence will increase the profits and benefits that AI has already produced in various global industries.

From helping shipping companies predict arrival times, to teaching scientists how to treat cancer more effectively, to enabling governments to detect crimes faster, artificial intelligence (AI) has had a profound impact worldwide.

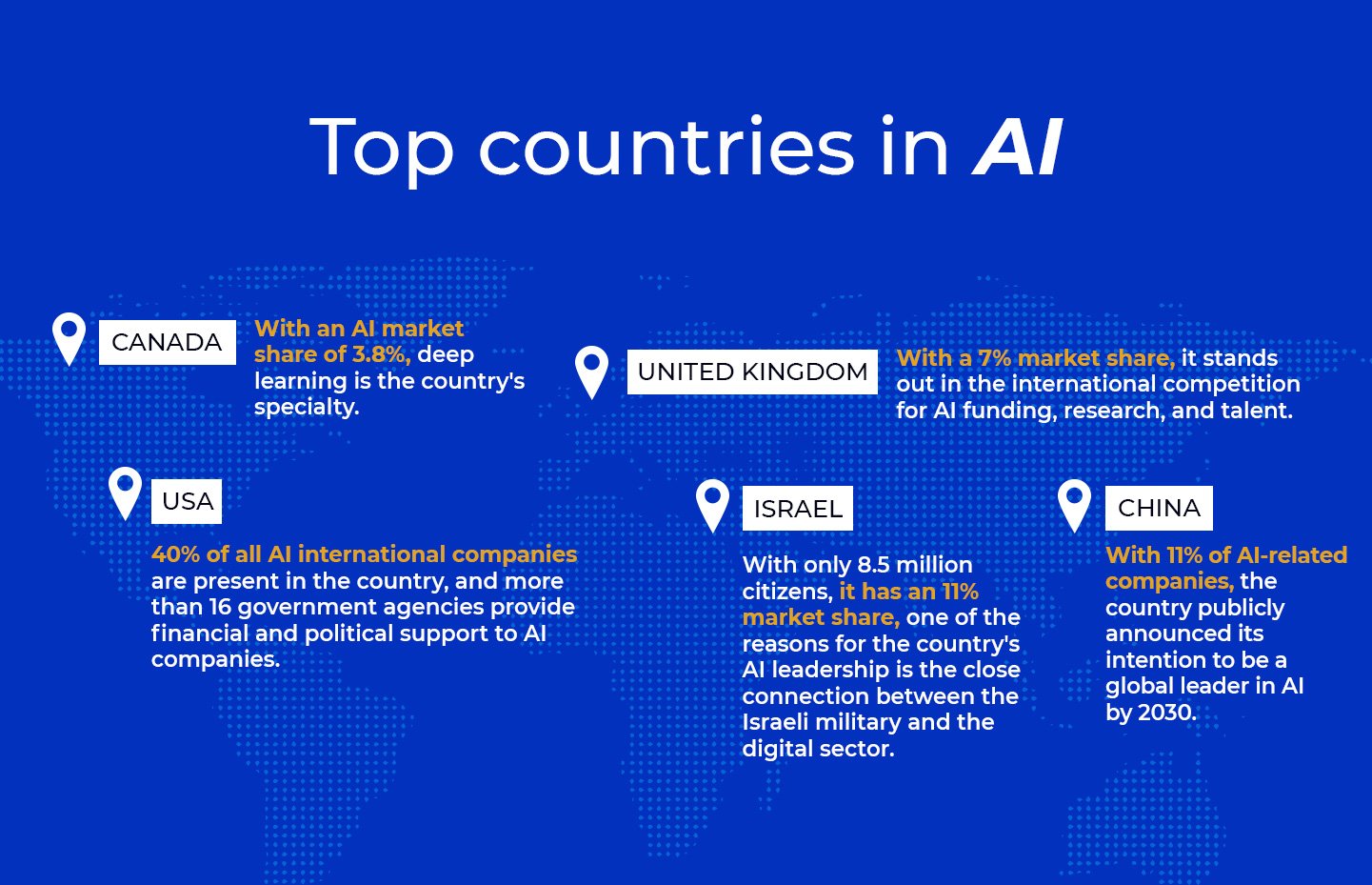

Artificial intelligence has been one of the main drivers of technological innovation in recent years. Currently, more than 30 countries are developing national strategies to improve their AI positions, according to the US think tank Information Technology and Innovation Foundation (ITIF).

The global AI market revenue, including software, hardware, and services, is estimated to grow 18.8% in 2022 and remains on track to break the $500 billion mark in 2024 worldwide.

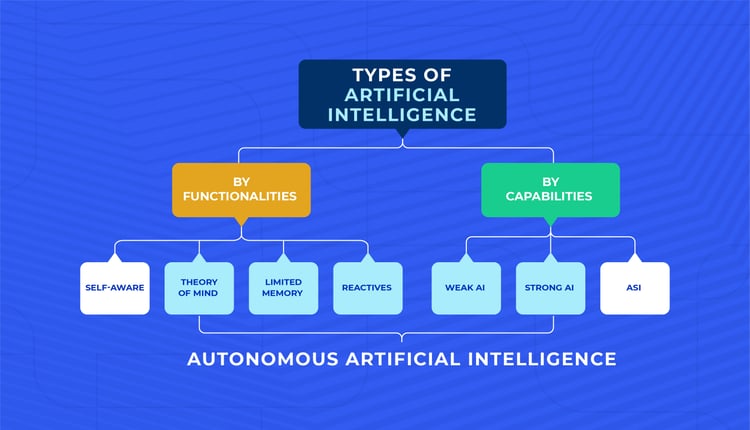

4 types of artificial intelligence and autonomous AI

“... intelligent systems are able to handle huge amounts of data and make complex calculations very quickly. But they lack an element that will be key to building the sentient machines we picture having in the future.” – Arend Hintze

In a 2016 article, Arend Hintze, former assistant professor of integrative biology and computer science and engineering at Michigan State University, explained that AI could be divided into four categories.

Hintze starts with task-specific intelligent systems widely used today to later progress to sentient systems that do not exist yet. We can find autonomous AI at an intermediate point between these technologies and the next generation of AI.

Type of AI #1: Reactive machines

This type of AI performs specific tasks according to the inputs it receives. Rather than engaging with the world through an all-encompassing perspective, it focuses on evaluating the best outcomes for the tasks it has been programmed for. It has neither memory nor perception of time, just a sharp focus on its objective.

Deep Blue, the IBM chess software that defeated Garry Kasparov in the 1990s, is an example. Deep Blue can recognize pieces on the chessboard and make predictions, but it can't utilize past experiences to influence future ones since it has no memory.

Type of AI #2: Limited memory

This AI can look into a past version of a representation of the world to retrieve certain information. In other words, it can recall and form memories over a specific period of time to inform future decisions.

We can see some examples of this technology in our daily lives. Some of the decision-making functions in self-driving cars are designed this way, and websites collect users’ navigational information to suggest relevant information or products.

Type of AI #3: Theory of mind

This type of AI is an important divide between the technology we already have and the technology to come in the next decades. In addition to forming representations of the world, these machines understand emotions on a social level.

This type of intelligence makes societies possible, allowing for agreements and meaningful interactions. Its capacities would include the ability to infer human intentions and predict behavior. Some robots, like the Kismet robot head and the humanoid robot Sophia, can recognize faces and respond to interactions with their own facial expressions.

Type of AI #4: Self-awareness

The last type of AI can form representations of itself. This would include recognizing its own internal states and predicting how others may feel. In other words, it has consciousness.

This kind of AI is far from being created. Before that, researchers and engineers will have to understand human memory, learning, and consciousness, and how to build machines that have those qualities.

What type of AI is autonomous artificial intelligence?

Autonomous artificial intelligence is an advancement in current AI technology, although it cannot develop an awareness of its environment or itself as humans do. It can, however, make its own decisions in emergencies and other highly critical situations. In any of these cases, autonomous AI orchestrates the different technologies needed to solve a problem and uses the most relevant ones at its discretion, based on parameters set by humans.

Autonomous AI is a bridge between present technology and what technology will be like in the future.

Weak AI vs strong AI vs Autonomous Artificial Intelligence

Besides being categorized by its functionalities, AI can also be divided by its capabilities, which are the abilities that produce an outcome.

AI can be clustered into three categories within this definition: weak AI, strong AI, and artificial superintelligence. As we previously stated, autonomous AI is a bridge between today’s technology and future technology, and it will evolve into an even more powerful technology in the upcoming years.

AI can be categorized as:

Weak AI

Also known as narrow AI, it is an AI system designed and trained to complete a specific task. Industrial robots and virtual personal assistants, such as Apple's Siri, use weak AI.

Strong AI

Also known as artificial general intelligence (AGI), it describes programming that can replicate the cognitive abilities of the human brain.

Strong AI has yet to be realized by AI researchers and scientists. They'd have to figure out a means to make machines conscious and program them with a full set of cognitive abilities to succeed.

Machines would have to take experiential learning to the next level, having the ability to apply experiential knowledge to a wider range of situations rather than merely boosting efficiency on single activities. A strong AI program would be able to pass the Turing Test.

Artificial Superintelligence

Artificial superintelligence (ASI) is a hypothetical AI that does more than mimic or understand human intelligence and behavior; ASI is when computers become self-aware and exceed human intelligence and ability.

ASI would theoretically be superior at everything we do, including math, science, athletics, art, medicine, hobbies, and emotional connections, in addition to reproducing human intelligence. ASI would have a better memory and would process and analyze information and stimuli more quickly.

Capabilities of Autonomous Artificial Intelligence

Autonomous AI capabilities fall between weak AI and strong AI, bridging the gap between the state of today’s AI and where it will be in the not-so-distant future. While autonomous AI’s abilities allow it to excel in specific and narrow tasks, it can also combine different technologies and choose how to connect them to achieve an expected outcome. It’s still far from thinking like a human, but it can be an invaluable peer in specific situations.

What is Machine Learning?

Machine learning (ML) is the approach most companies use today when they deploy artificial intelligence systems. The two terms, AI and ML, are often used interchangeably and ambiguously.

Machine learning is a subfield of artificial intelligence that allows machines to learn without being explicitly programmed. The majority of artificial intelligence in this category is paired with big data. Applications that make suggestions, such as Netflix or Spotify, use this approach.
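To make "learning without being explicitly programmed" concrete, here is a minimal sketch in Python. Instead of hard-coding a rule, the program estimates one from examples using an ordinary least-squares line fit. The data (hours watched versus a rating) is made up for illustration, and real recommendation systems are far more elaborate than this.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept from example pairs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up training examples: hours watched and the rating a user gave.
hours = [1, 2, 3, 4, 5]
ratings = [3, 5, 7, 9, 11]

slope, intercept = fit_line(hours, ratings)
print(slope, intercept)       # 2.0 1.0
print(slope * 6 + intercept)  # 13.0, a prediction for an unseen input
```

Nobody wrote the rule "rating = 2 x hours + 1" into the program; it was recovered from the examples, which is the core idea behind machine learning.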

Learn more about Machine Learning (ML) and how it works in detail in our article Machine Learning: What is ML and how does it work?

What is Deep Learning?

Deep Learning (DL) is a type of machine learning that trains a computer to perform human-like tasks, such as speech recognition, image identification, or predictions. The computer is trained to recognize patterns using many processing layers.

Using deep learning, a computer model learns to perform classification tasks from images, text, or sound. Deep learning models can achieve cutting-edge accuracy, sometimes even outperforming humans. Models are trained with a vast set of labeled data and neural network architectures that have multiple layers.
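The "many processing layers" can be pictured with a small sketch. The Python below passes an input through two hidden layers and one output layer. The weights are arbitrary numbers chosen for illustration; in a real deep learning model they would be learned from labeled data, and the network would be much larger.

```python
import math


def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    # Keep positive values and replace negative ones with zero.
    return [max(0.0, v) for v in values]


def sigmoid(x):
    # Squash any number into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-x))


# Illustrative, hand-picked weights (a trained model would learn these).
W1, B1 = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]
W2, B2 = [[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]
W3, B3 = [[0.7, -0.3]], [0.05]


def forward(features):
    """Pass the input through each layer in turn and return a score in (0, 1)."""
    hidden1 = relu(dense(features, W1, B1))
    hidden2 = relu(dense(hidden1, W2, B2))
    score = dense(hidden2, W3, B3)[0]
    return sigmoid(score)


print(forward([0.2, 0.7, 0.1]))  # a probability-like score between 0 and 1
```

Each layer transforms the output of the previous one, which is what lets deeper models represent increasingly complex patterns.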

Click here and learn more about how Deep Learning works and its impact worldwide today.

Benefits and risks of AI and autonomous AI

Something is clear at this point: artificial intelligence will keep evolving, far beyond our current expectations.

It has already altered the way we consume media, communicate with one another, and overall experience the world around us. However, as with every new technology that grows and becomes widely available, there are benefits and risks involved in its use. Here are some of them:

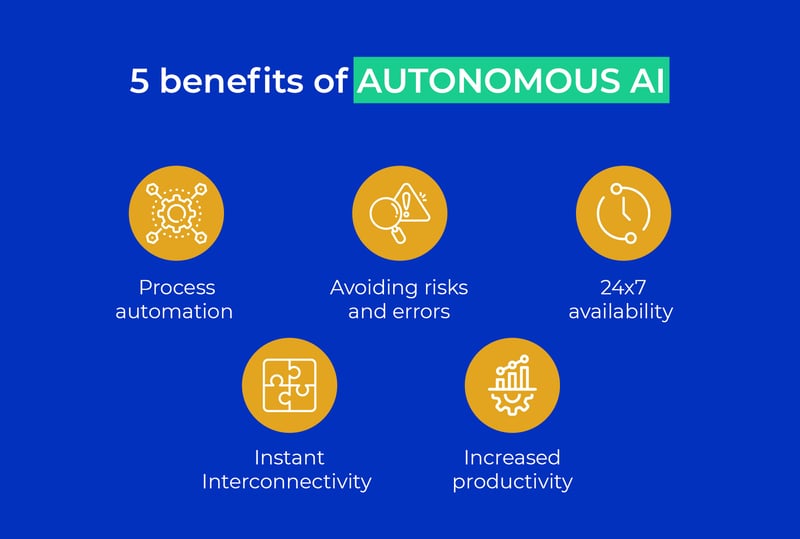

5 Benefits of AI and autonomous AI

1. Process automation: One of the most immediate benefits of this technology is its ability to take over repetitive tasks, which can be accelerated with the right machinery.

2. Avoiding risks and errors: One of the main qualities of AI is its accuracy. Human operators cannot stay fully attentive all of the time. Machines can apply the same rules consistently, which reduces errors in repetitive tasks.

3. 24x7 availability: Another major advantage of AI over humans is its availability. A machine can keep working while we rest, which makes it especially valuable for tasks that never stop.

4. Instant interconnectivity: In an increasingly connected world, AI and autonomous AI help speed up communications and the passing of information not only between devices but also between individuals.

5. Increased productivity: The capabilities of AI and autonomous AI make it possible to clearly detect areas of opportunity for each business, and anticipate future events that alter the pace of business production.

5 Risks of AI and autonomous AI

1. Prejudice and discrimination: It is becoming increasingly clear that AI can reproduce many human social prejudices. This largely has to do with training data that records some groups of the population more than others. This is a risk that needs to be addressed when developing AI technologies.

2. Policy implications: The scope of AI technologies may put sensitive information from various regions in the world at risk. So it is important to create security procedures and even regulatory apparatus to avoid such damage.

3. High costs: The investment companies must make in artificial intelligence is usually very high. As time goes by, the price of implementing these technologies will decrease, but it is essential to create greater opportunities so that as many people as possible can use them.

4. Lack of regulations: Because the operation and scope of this technology are poorly understood, third parties are often affected and are not protected by the law when their security or privacy is violated by this type of technology.

5. Media representations: Many representations of AI in popular culture distort the perception of what this technology does. Having more information beyond these representations will help people engage in more fruitful discussions about AI.

4 industries that benefit from AI

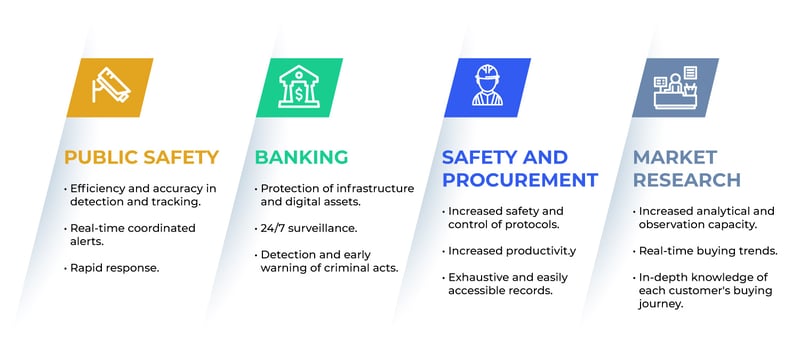

1. Public Safety

Artificial intelligence advances in public safety will transform the sector forever.

Video surveillance devices will cease to be tools; instead, they will be able to act in real-time and make decisions in conjunction with human operators. Officers will concentrate on attending to the emergency or case at hand rather than dealing with the manual and unfruitful tasks of monitoring and tracking vehicles.

Learn more about our autonomous AI solution for law enforcement here.

And get the whole scope of how AI has impacted surveillance in our full article here.

2. Banking

AI will transform the financial industry, especially in security outside and inside banking facilities.

While cybersecurity has become the main issue for several AI solutions, our new autonomous AI solutions will help provide a secure experience for customers, protecting them from theft attempts and even alerting them about any suspicious behavior in real-time.

3. Safety and procurement

Using powerful computer algorithms, artificial intelligence allows procurement firms to tackle complicated challenges more efficiently and effectively. From spend analysis to contract administration and strategic sourcing, AI may be integrated into a variety of software applications.

Most companies that use AI seek to increase efficiency, improve quality, accelerate processes, and optimize operations, which in turn helps them increase sales and minimize errors. The autonomous AI applications that will be developed for the industry in the coming years will amplify these benefits.

4. Market Research

One of the benefits of employing AI in market research is that it dramatically reduces the time needed to create an overview of customer desires. AI solutions produce in a matter of seconds information that used to take marketing teams days or even weeks. Early adopters of these technologies are already reaping economic benefits, and autonomous AI developments in the industry will further increase profits.

Companies can continuously study the market using automation, information creation, and natural language processing rather than spending time, labor, and money on these tasks. Autonomous AI will free humans from more repetitive, low-impact tasks.

Learn more about how our autonomous artificial intelligence solutions can work for your organization. Just click here, leave us your contact information, and we will show you all the possibilities you can achieve with our revolutionary technology.

AI Glossary

Would you like to understand more about artificial intelligence but do not know where to start? You're in the right place!

We know that it is challenging to understand artificial intelligence. Even more so if you do not know what the words used in AI discussions mean. So here are some essential terms to know about AI.

A/B Testing

A statistical method for comparing two (or more) variants or approaches of a system or a model. The goal of A/B testing is to determine which approach works better and whether the difference is statistically significant.
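As a rough illustration, the sketch below compares two variants with a two-proportion z-test, one common way to check statistical significance. The visitor and conversion counts are invented, and the 0.05 cutoff is a conventional assumption rather than a fixed rule.

```python
import math


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return the z statistic and two-sided p-value for B versus A."""
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_error
    # Standard normal CDF expressed with the error function.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Invented data: variant A converts 100 of 1000 visitors, variant B 130 of 1000.
z, p_value = two_proportion_z_test(100, 1000, 130, 1000)
print(round(z, 2), round(p_value, 3))
print("significant at 0.05" if p_value < 0.05 else "not significant at 0.05")
```

With these made-up numbers the difference is significant at the 0.05 level; with smaller samples the same conversion rates might not be.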

Activation Function

A function in Artificial Neural Networks that takes the weighted sum of all the inputs from the previous layer and produces an output value that is passed to the next layer (for example, ReLU or sigmoid).
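A minimal Python sketch of the two activation functions named above, applied to the weighted sum of a single neuron. The input values, weights, and bias are arbitrary numbers picked for the example.

```python
import math


def relu(x):
    # ReLU: pass positive values through, replace negative values with zero.
    return max(0.0, x)


def sigmoid(x):
    # Sigmoid: squash any value into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-x))


def neuron(inputs, weights, bias, activation):
    """Compute the weighted sum of the inputs, then apply the activation."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(weighted_sum)


inputs = [1.0, 2.0]
weights = [0.5, -1.0]
bias = 0.5
# Weighted sum: 0.5 * 1.0 + (-1.0) * 2.0 + 0.5 = -1.0

print(neuron(inputs, weights, bias, relu))     # 0.0
print(neuron(inputs, weights, bias, sigmoid))  # about 0.269
```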

Algorithm

An unambiguous description of a process: a collection of mathematical instructions or rules that can execute computations, handle data, and automate reasoning to solve a class of problems.

Artificial Intelligence

Technology that fosters collaboration between humans and technology by automatically performing highly analytical, responsive, and scalable tasks.

Artificial Neural Network (ANN)

Artificial neural networks are a subset of machine learning and the heart of deep learning algorithms. Their name and structure are inspired by the human brain, mimicking how biological neurons signal to one another.

Artificial General Intelligence (AGI)

It is the intelligence of a machine that could perform a task in the same way as the human brain and is a topic of great interest to science fiction writers and researchers.

Area Under the Curve (AUC)

A metric used in machine learning, typically the area under the ROC curve, to determine which of several models has the highest performance on a classification task.
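AUC is most often taken as the area under the ROC curve, which equals the share of positive/negative pairs in which the model scores the positive example higher. The sketch below computes it that way for two hypothetical models. The labels and scores are invented, and the pairwise loop is chosen for readability, not speed.

```python
def auc(labels, scores):
    """Fraction of (positive, negative) pairs ranked correctly; ties count half."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    wins = 0.0
    for pos in positives:
        for neg in negatives:
            if pos > neg:
                wins += 1.0
            elif pos == neg:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Invented ground truth and the scores two hypothetical models assigned.
labels = [1, 1, 0, 1, 0, 0]
model_a = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
model_b = [0.6, 0.3, 0.7, 0.5, 0.8, 0.2]

print(round(auc(labels, model_a), 3))  # 0.889
print(round(auc(labels, model_b), 3))  # 0.333
```

An AUC of 1.0 means every positive example is ranked above every negative one, and 0.5 is what random guessing would score on average, so model A is the better of the two here.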

Autonomous Artificial Intelligence

The next step in the development of AI technologies. An application that enhances the capacities of detection, recognition, and response of AI solutions in high criticality areas.

Batch

The set of examples used in one iteration of model training.

Big Data

A large amount of structured and unstructured data, usually too complex for standard data processing software to handle and categorize.

Black-box algorithms

Any artificial intelligence system whose inputs and internal operations are not visible to the user. In a general sense, it is an impenetrable system.

Bounding Box

The smallest box that fully contains a set of points or an object. It is widely used in computer vision applications.
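For an axis-aligned box, "smallest box" simply means taking the minimum and maximum coordinates. A short Python sketch, with made-up pixel coordinates:

```python
def bounding_box(points):
    """Return (x_min, y_min, x_max, y_max) for a list of (x, y) points."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return min(xs), min(ys), max(xs), max(ys)


# Made-up pixel coordinates outlining a detected object.
outline = [(2, 3), (5, 1), (4, 7), (1, 4)]
print(bounding_box(outline))  # (1, 1, 5, 7)
```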

Classification

Approximating a mapping function from input variables to discrete output variables.

Clustering

Grouping related examples, particularly during unsupervised learning. After all of the instances have been grouped, a human can optionally give each cluster a meaning.
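One widely used clustering method is k-means. The sketch below is a simplified one-dimensional version in Python: the data values and the two starting centroids are invented, and a production implementation would handle initialization and convergence more carefully.

```python
def k_means_1d(values, centroids, iterations=10):
    """Assign each value to its nearest centroid, then recompute the centroids."""
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for value in values:
            nearest = min(range(len(centroids)),
                          key=lambda i: abs(value - centroids[i]))
            clusters[nearest].append(value)
        # Move each centroid to the mean of its cluster (keep it if empty).
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids, clusters


# Invented measurements that visibly fall into two groups.
values = [1.0, 1.5, 2.0, 9.0, 10.0, 11.0]
centroids, clusters = k_means_1d(values, centroids=[0.0, 5.0])
print(centroids)  # [1.5, 10.0]
print(clusters)   # [[1.0, 1.5, 2.0], [9.0, 10.0, 11.0]]
```

No labels were provided. The algorithm found the two groups on its own, and a person could afterwards name them, for example "low" and "high".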

Computer Vision

It is a subfield of machine learning that teaches computers to 'see' and understand the content of digital images.

Learn more about how computer vision works with artificial intelligence in our article here.

Convolutional Neural Networks

A deep learning algorithm that takes an input image, assigns importance to various aspects of the picture, and distinguishes one from another.

Data Analysis

Obtaining an understanding of data by considering samples, measurement, and visualization.

Decision Tree

A category of supervised machine learning algorithms in which the data is iteratively split according to a given parameter or criterion.
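The splitting step can be illustrated with a single split, sometimes called a decision stump. The Python sketch below tries every midpoint between neighboring values and keeps the threshold that misclassifies the fewest examples. The speeds and labels are invented, and real decision trees usually rely on impurity measures such as Gini or entropy and repeat the split recursively on each side.

```python
def best_split(values, labels):
    """Return the threshold with the fewest errors for the rule 'value > threshold'."""
    best_threshold, best_errors = None, len(labels) + 1
    ordered = sorted(set(values))
    for low, high in zip(ordered, ordered[1:]):
        threshold = (low + high) / 2
        # Predict class 1 when the value is above the threshold, else class 0.
        errors = sum((v > threshold) != bool(label)
                     for v, label in zip(values, labels))
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold, best_errors


# Invented example: vehicle speeds, labeled 1 when flagged as speeding.
speeds = [20, 25, 30, 60, 70, 80]
flagged = [0, 0, 0, 1, 1, 1]
print(best_split(speeds, flagged))  # (45.0, 0)
```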

Deep learning

It is a type of machine learning that trains a computer to perform tasks as humans do, such as speech recognition, image identification, or making predictions.

Generative Adversarial Networks (GANs)

Algorithmic architectures that use two neural networks, pitting one against the other to generate new, synthetic instances of data passing for actual data. They are used in image, video, and voice generation applications.

Machine learning or ML

The use and development of computer systems that can learn and adapt without being explicitly told what to do.

Natural Language Processing

A specialization within AI that manages the understanding and creation of verbal and written language.

Recurrent Neural Networks (RNN)

Neural networks with internal loops that allow information to persist and be passed from one step to the next. Their goal is to mimic how humans naturally learn and use their experience to read situations.

Reinforcement learning

It is the training of machine learning models to make a sequence of decisions. The agent learns to achieve a goal in an uncertain, potentially complex environment.

Semi-supervised learning

It's a method of developing artificial intelligence in which a computer algorithm is trained on a small amount of labeled data combined with a larger amount of unlabeled data.

Supervised learning

It's a method of developing artificial intelligence in which a computer algorithm is trained on labeled input data for a certain output.

Training data

A dataset for training, tuning, and improving AI models.

Transfer learning

A deep learning approach in which programmers reuse a neural network developed for one goal and apply it to a different domain to address a different problem.

Turing Test

A test of a computer's intelligence in which a person tries to differentiate the machine from another human using the responses to questions posed to both.

Unsupervised learning

Artificial intelligence algorithms used to find patterns in data sets whose data points are not classified or labeled.

If you want to learn about the impact of Machine Learning in your day-to-day life, read our article 5 Machine Learning examples.

Discover what our autonomous artificial intelligence can do for you and your company.